AI-Supported Decision Making

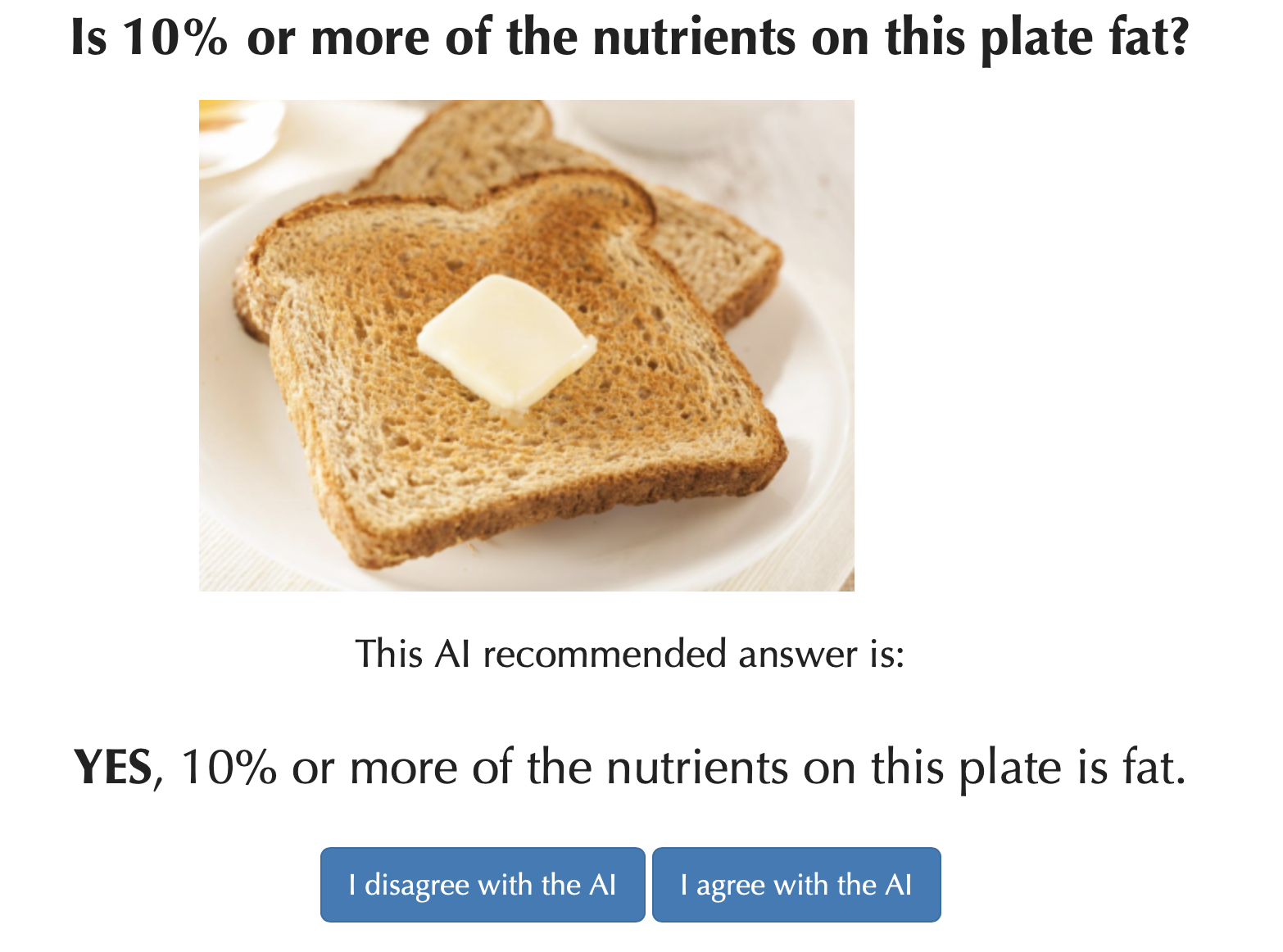

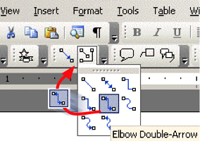

AI-powered decision support tools form part of sociotechnical systems, that is, human+AI teams tasked with making decisions. Because people and AI-powered systems have complementary strengths, many expected that human+AI teams would perform better on decision-making tasks than either people or AIs alone. However, there is mounting evidence that human+AI teams often perform worse than AIs alone. Building on insights from both machine learning and cognitive science, we are developing new general principles and specific solutions to overcome human overreliance on AI and to help human+AI teams make higher-quality and more confident decisions than existing systems enable.

Our results also suggest that the limitations of contemporary explainable AI solutions are underappreciated because the most commonly used methods for evaluating AI-powered decision support systems are likely to produce misleading (overly optimistic) results.

Zana Buçinca, Maja Barbara Malaya, and Krzysztof Z. Gajos. To Trust or to Think: Cognitive Forcing Functions Can Reduce Overreliance on AI in AI-assisted Decision-making. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), 2021.

Maia Jacobs, Jeffrey He, Melanie F. Pradier, Barbara Lam, Andrew C. Ahn, Thomas H. McCoy, Roy H. Perlis, Finale Doshi-Velez, and Krzysztof Z. Gajos. Designing AI for Trust and Collaboration in Time-Constrained Medical Decisions: A Sociotechnical Lens. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 2021. Association for Computing Machinery.

Maia Jacobs, Melanie F. Pradier, Thomas H. McCoy Jr, Roy H. Perlis, Finale Doshi-Velez, and Krzysztof Z. Gajos. How machine-learning recommendations influence clinician treatment selections: the example of the antidepressant selection. Translational Psychiatry, 11, 2021.

Zana Buçinca, Phoebe Lin, Krzysztof Z. Gajos, and Elena L. Glassman. Proxy Tasks and Subjective Measures Can Be Misleading in Evaluating Explainable AI Systems. In Proceedings of the 25th International Conference on Intelligent User Interfaces. ACM, 2020. To appear.

Quantifying Motor Impairments

Healthcare, clinical trials, and research related to neurological disease all require tools for accurately and objectively measuring motor impairments.

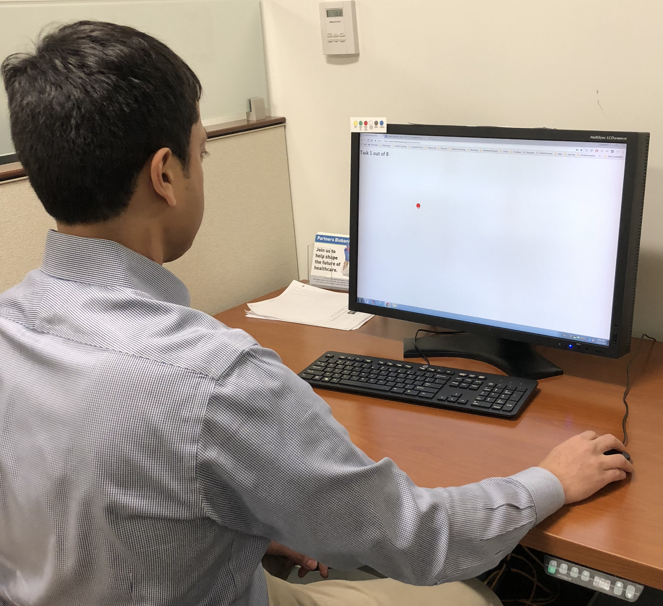

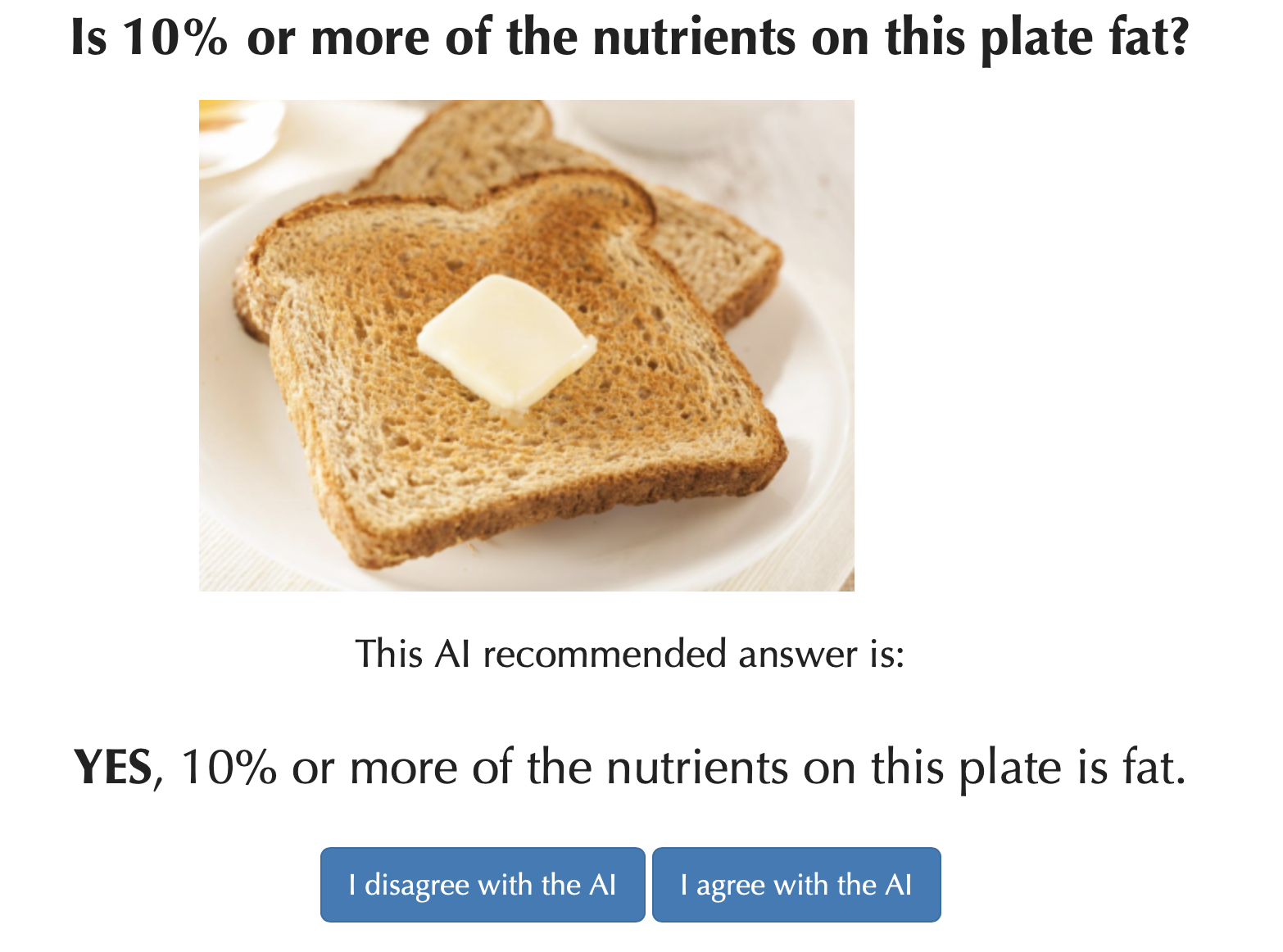

Our first tool, called Hevelius, measures motor impairment in the dominant arm based on a person's performance on a simple computer mouse-based task. We are working on other tools as well as on ways to make accurate measurements possible at home without help from a clinician. Such at-home measurements can enable granular longitudinal measurements of disease progression as well as large-scale assessments. This project is done in collaboration with the Laboratory for Deep Neurophenotyping at Massachusetts General Hospital.

Nergis C Khan, Vineet Pandey, Krzysztof Z Gajos, and Anoopum S Gupta. Free-Living Motor Activity Monitoring in Ataxia-Telangiectasia. The Cerebellum, pages 1-12, 2021.

Krzysztof Z. Gajos, Katharina Reinecke, Mary Donovan, Christopher D. Stephen, Albert Y. Hung, Jeremy D. Schmahmann, and Anoopum S. Gupta. Computer Mouse Use Captures Ataxia and Parkinsonism, Enabling Accurate Measurement and Detection. Movement Disorders, 35:354–358, February 2020.

Improving Care Coordination in Complex Healthcare

Children with complex health conditions require care from a large, diverse team of caregivers that includes multiple types of medical professionals, parents, and community support organizations. Coordination of their outpatient care, essential for good outcomes, presents major challenges. Our formative studies revealed that the nature of teamwork in complex care poses challenges to team coordination that extend beyond those identified in prior work and beyond those that existing coordination systems can handle. We are building on a computational theory of teamwork to create entirely new tools to support complex, loosely-coupled teamwork.

Maia Jacobs, Galina Gheihman, Krzysztof Z. Gajos, and Anoopum S. Gupta. "I Think We Know More Than Our Doctors": How Primary Caregivers Manage Care Teams with Limited Disease-related Expertise. Proc. ACM Hum.-Comput. Interact., 3(CSCW):159:1–159:22, November 2019.

Ofra Amir, Barbara J. Grosz, Krzysztof Z. Gajos, and Limor Gultchin. Personalized change awareness: Reducing information overload in loosely-coupled teamwork. Artificial Intelligence, 275:204–233, 2019.

Ofra Amir, Barbara Grosz, and Krzysztof Z. Gajos. Mutual Influence Potential Networks: Enabling Information Sharing in Loosely-Coupled Extended-Duration Teamwork. In Proceedings of IJCAI'16, 2016.

Ofra Amir, Barbara J. Grosz, Krzysztof Z. Gajos, Sonja M. Swenson, and Lee M. Sanders. From Care Plans to Care Coordination: Opportunities for Computer Support of Teamwork in Complex Healthcare. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems, CHI '15, pages 1419-1428, New York, NY, USA, 2015. ACM.

Honorable Mention

Ofra Amir, Barbara J. Grosz, Krzysztof Z. Gajos, Sonja M. Swenson, and Lee M. Sanders. AI Support of Teamwork for Coordinated Care of Children with Complex Conditions. In AAAI Fall Symposium: Expanding the Boundaries of Health Informatics Using AI: Making Personalized and Participatory Medicine A Reality, 2014.

Lab in the Wild

LabintheWild is a platform for conducting large-scale behavioral experiments with unpaid online volunteers. LabintheWild helps make empirical research in Human-Computer Interaction more reliable (by making it possible to recruit many more participants than would be possible in conventional laboratory studies) and more generalizable (by enabling access to very diverse groups of participants).

LabintheWild experiments typically attract thousands or tens of thousands of participants (with two studies reaching more than 250,000 people). LabintheWild's volunteer participants have also been shown to provide more reliable data and exert themselves more than participants recruited via paid platforms (like Amazon Mechanical Turk). A key characteristic of LabintheWild is its incentive structure: instead of money, participants are rewarded with information about their performance and the ability to compare themselves to others. This design choice engages curiosity and enables social comparison, both of which motivate participants.
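As a rough illustration of the social-comparison feedback described above, the sketch below computes how a participant's score compares with scores from earlier participants. The function name `percentile_rank`, the mid-rank treatment of ties, and the example scores are all assumptions made for illustration; this is not LabintheWild's actual implementation.

```python
from bisect import bisect_left, bisect_right


def percentile_rank(score, previous_scores):
    """Return the percentile rank (0-100) of `score` among earlier scores.

    Hypothetical helper: ties count as half, so a score equal to every
    earlier score lands at the 50th percentile. Returns None when there
    is nobody to compare against yet.
    """
    if not previous_scores:
        return None
    ordered = sorted(previous_scores)
    below = bisect_left(ordered, score)          # scores strictly lower
    ties = bisect_right(ordered, score) - below  # scores exactly equal
    return 100.0 * (below + 0.5 * ties) / len(ordered)


# Made-up scores from five earlier participants.
earlier = [1, 3, 5, 7, 9]
print(percentile_rank(7, earlier))  # 70.0
```

A feedback page could then phrase the result as "you scored higher than roughly 70% of participants", which is one simple way to support the comparison the incentive structure relies on.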

LabintheWild is co-directed by Profs. Katharina Reinecke and Krzysztof Gajos.

Here's the original LabintheWild paper, which demonstrates that the data obtained on LabintheWild are as reliable as those captured in traditional experiments:

Katharina Reinecke and Krzysztof Z. Gajos. LabintheWild: Conducting Large-Scale Online Experiments With Uncompensated Samples. In Proceedings of CSCW'15, 2015.

Honorable Mention

Here are some papers that relied on data collected on LabintheWild:

Bernd Huber and Krzysztof Z. Gajos. Conducting online virtual environment experiments with uncompensated, unsupervised samples. PLOS ONE, 15(1):1–17, January 2020.

Krzysztof Z. Gajos, Katharina Reinecke, Mary Donovan, Christopher D. Stephen, Albert Y. Hung, Jeremy D. Schmahmann, and Anoopum S. Gupta. Computer Mouse Use Captures Ataxia and Parkinsonism, Enabling Accurate Measurement and Detection. Movement Disorders, 35:354–358, February 2020.

Qisheng Li, Krzysztof Z. Gajos, and Katharina Reinecke. Volunteer-Based Online Studies With Older Adults and People with Disabilities. In Proceedings of the 20th International ACM SIGACCESS Conference on Computers and Accessibility, ASSETS '18, pages 229–241, New York, NY, USA, 2018. ACM.

Marissa Burgermaster, Krzysztof Z. Gajos, Patricia Davidson, and Lena Mamykina. The Role of Explanations in Casual Observational Learning about Nutrition. In Proceedings of CHI'17, 2017. To appear.

Krzysztof Z. Gajos and Krysta Chauncey. The Influence of Personality Traits and Cognitive Load on the Use of Adaptive User Interfaces. In Proceedings of ACM IUI'17, 2017. To appear.

Bernd Huber, Katharina Reinecke, and Krzysztof Z. Gajos. The Effect of Performance Feedback on Social Media Sharing at Volunteer-Based Online Experiment Platforms. In Proceedings of CHI'17, 2017. To appear.

Katharina Reinecke and Krzysztof Z. Gajos. Quantifying Visual Preferences Around the World. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '14, pages 11-20, New York, NY, USA, 2014. ACM.

Katharina Reinecke, Tom Yeh, Luke Miratrix, Rahmatri Mardiko, Yuechen Zhao, Jenny Liu, and Krzysztof Z. Gajos. Predicting users' first impressions of website aesthetics with a quantification of perceived visual complexity and colorfulness. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '13, pages 2049-2058, New York, NY, USA, 2013. ACM.

Honorable Mention

DERBI: Communicating Individual Biomonitoring and Personal Exposure Results to Study Participants

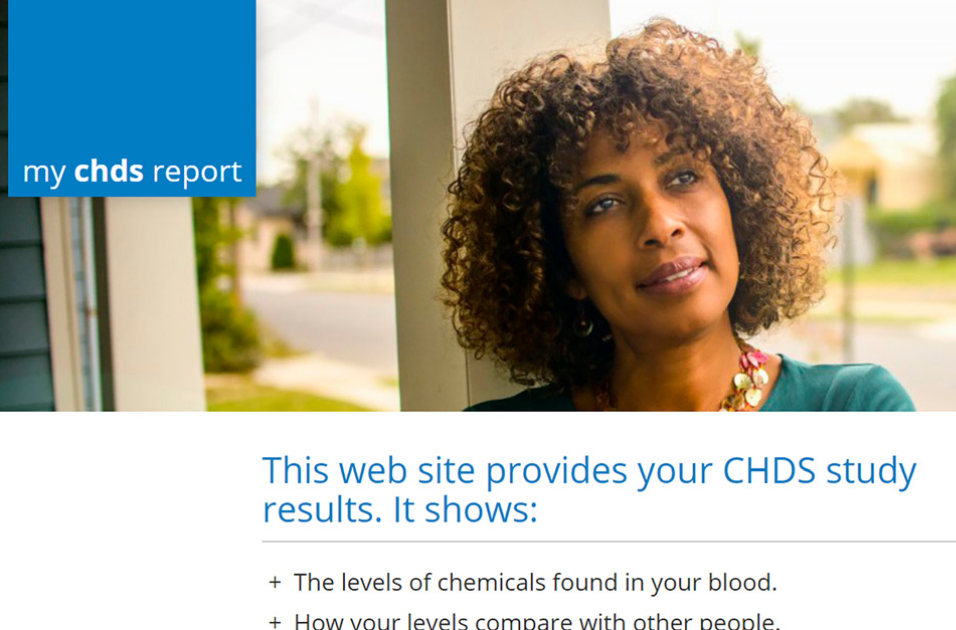

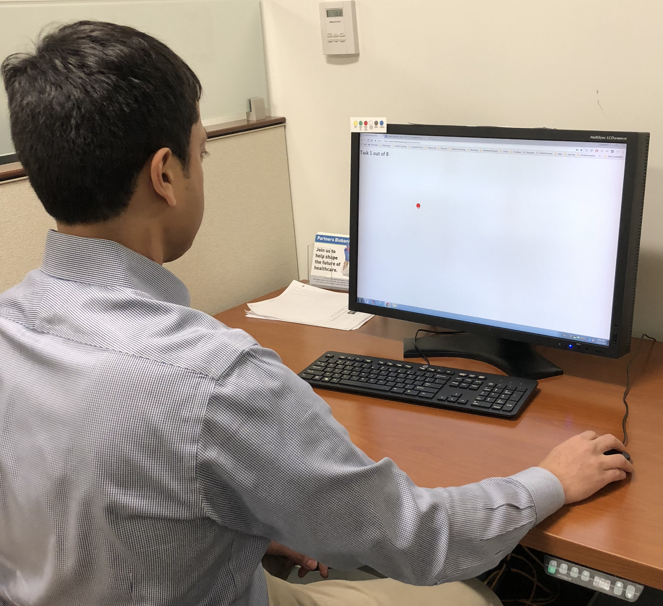

Epidemiologic studies and public health biomonitoring rely on chemical exposure measurements in blood, urine, and other tissues, and in personal environments, such as homes. For many chemicals, the health implications of individual results are uncertain, and the sources and strategies to reduce exposure may not be known. Yet, a growing number of researchers consider it their ethical obligation to report the results back to their participants. In a project led by the Silent Spring Institute, we are building scalable online tools to help researchers communicate personalized results to study participants in a manner that appropriately conveys what is and what is not known about the sources and effects of different environmental chemicals.

Julia Green Brody, Piera M Cirillo, Katherine E Boronow, Laurie Havas, Marj Plumb, Herbert P Susmann, Krzysztof Z Gajos, and Barbara A Cohn. Outcomes from returning individual versus only study-wide biomonitoring results in an environmental exposure study using the digital exposure report-back interface (DERBI). Environmental Health Perspectives, 129(11):117005, 2021.

Katherine E. Boronow, Herbert P. Susmann, Krzysztof Z. Gajos, Ruthann A. Rudel, Kenneth C. Arnold, Phil Brown, Rachel Morello-Frosch, Laurie Havas, and Julia Green Brody. DERBI: A Digital Method to Help Researchers Offer "Right-to-Know" Personal Exposure Results. Environmental Health Perspectives, 125(2), February 2017.

Enhancing Parent-Child Interaction Therapy

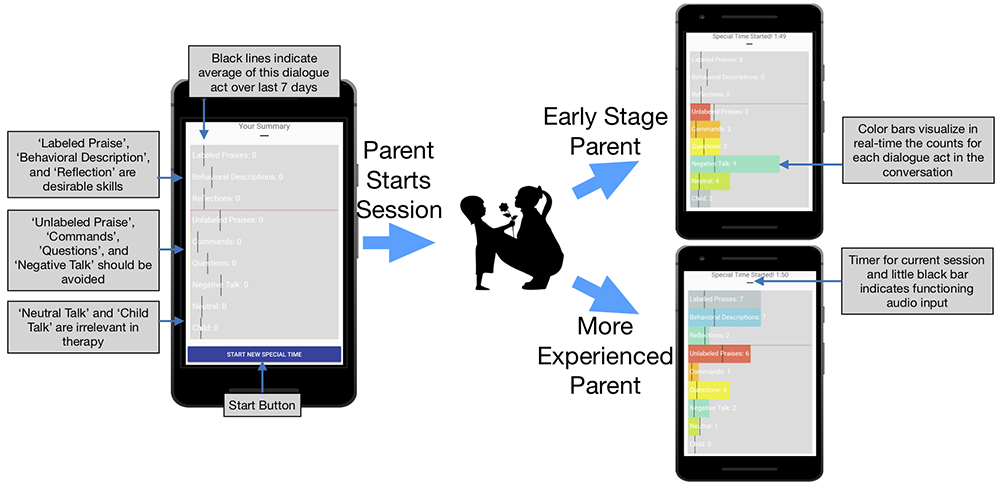

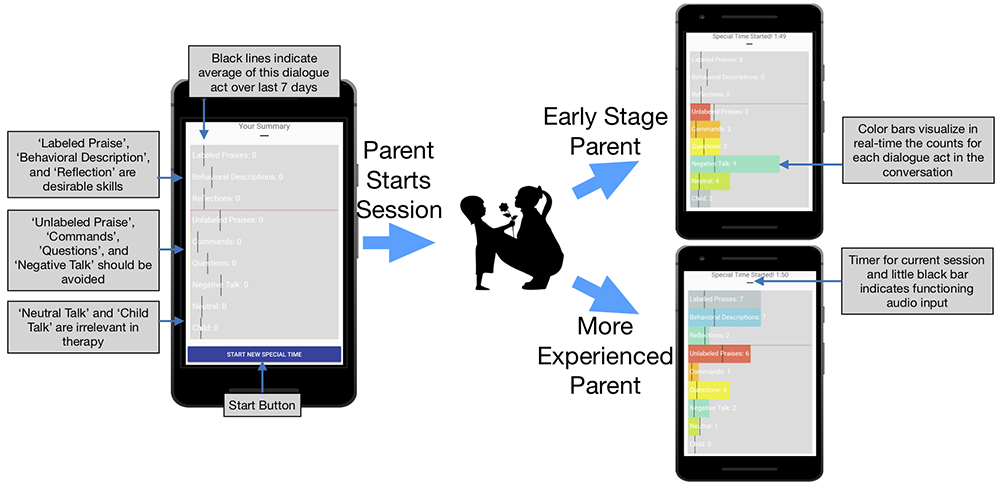

Parent-child interaction therapy (PCIT) helps parents improve the quality of interaction with children who have behavior problems. The therapy trains parents to use effective dialogue acts when interacting with their children. Besides weekly coaching by therapists, the therapy relies on deliberate practice of skills by parents in their homes. We developed SpecialTime, a system that provides parents engaged in PCIT with automatic, real-time feedback on their dialogue act use. The results of a one-month pilot study with parents enrolled in PCIT suggest that automatic feedback on spoken dialogue acts is possible in PCIT, and that parents find the automatic feedback useful. We are currently conducting a larger evaluation of SpecialTime's impact on the effectiveness of the therapy. This work is co-led by Prof. Elizabeth Brestan-Knight.

Bernd Huber, Richard F. Davis, III, Allison Cotter, Emily Junkin, Mindy Yard, Stuart Shieber, Elizabeth Brestan-Knight, and Krzysztof Z. Gajos. SpecialTime: Automatically Detecting Dialogue Acts from Speech to Support Parent-Child Interaction Therapy. In Proceedings of the 13th EAI International Conference on Pervasive Computing Technologies for Healthcare, PervasiveHealth'19, pages 139–148, New York, NY, USA, 2019. ACM.

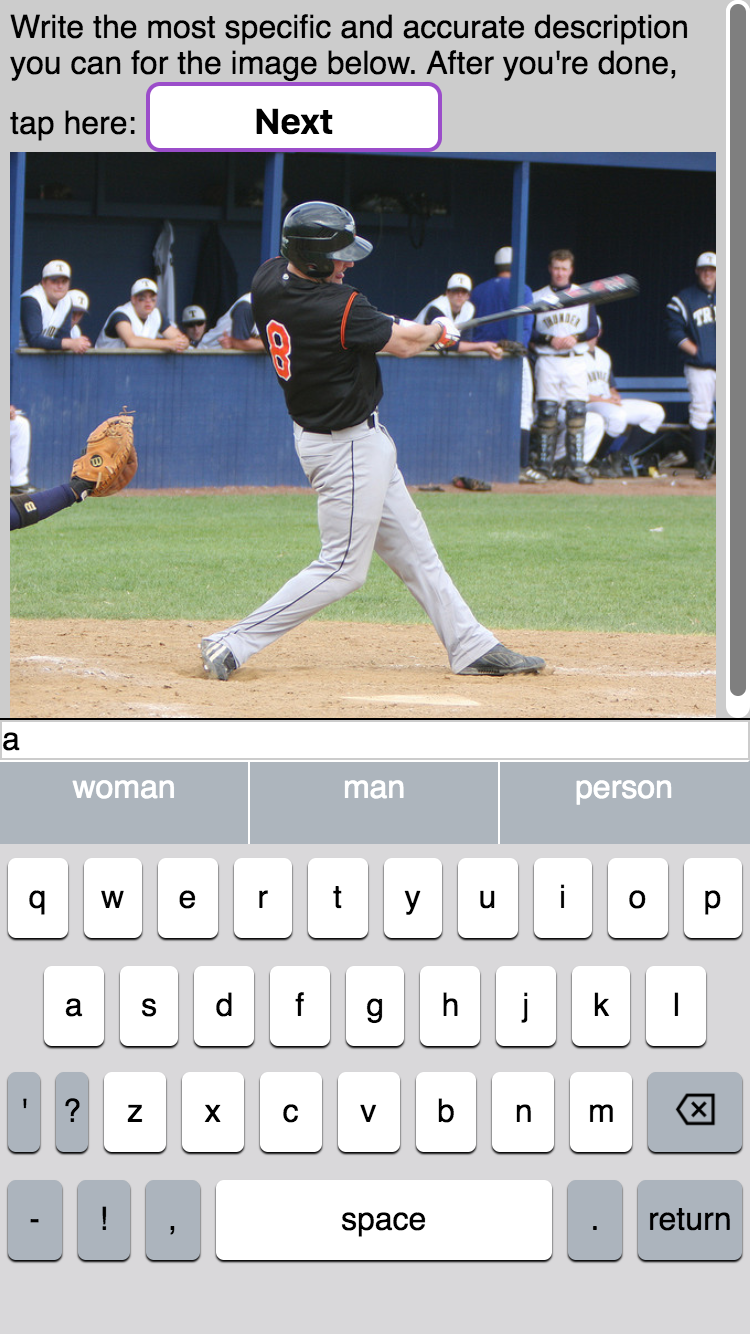

Impact of Predictive Text on the Content of What People Write

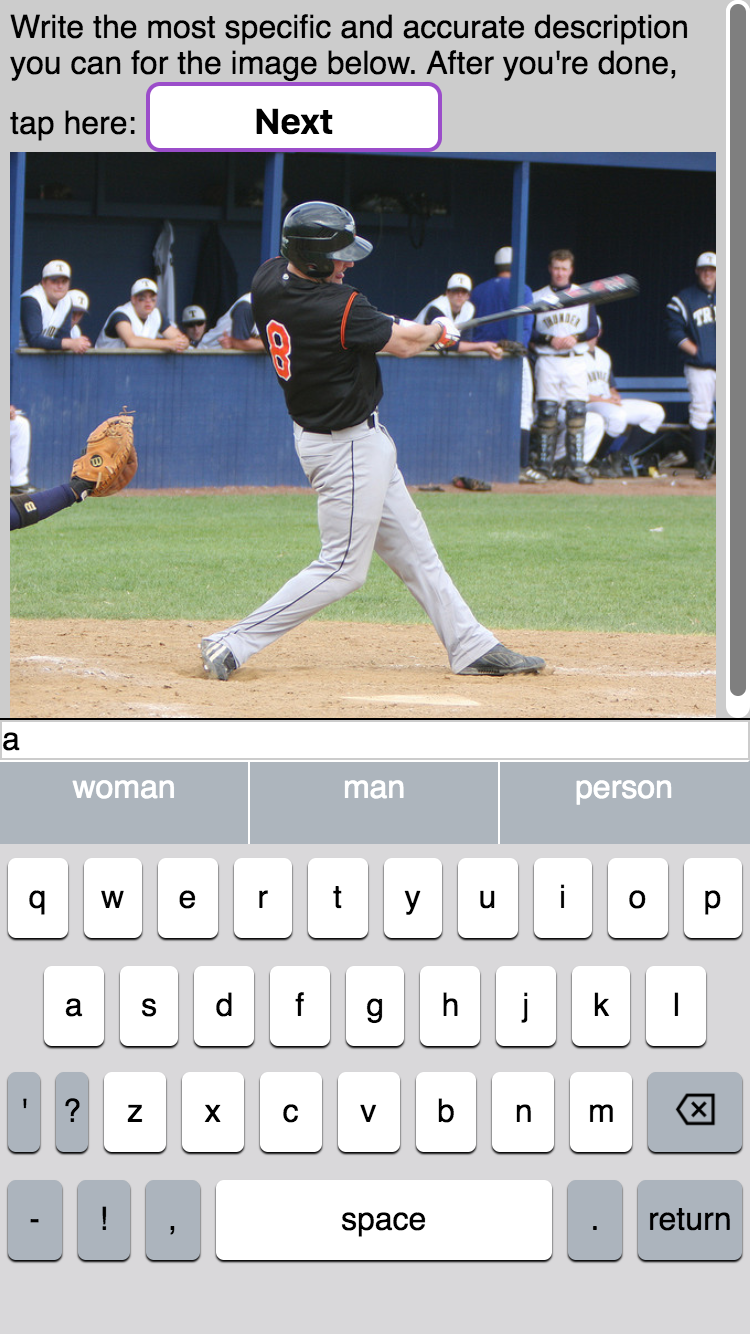

Predictive text technology (e.g., the word suggestions displayed on most keyboards on mobile devices) was designed to improve the ease and efficiency of text entry. Our work demonstrates that predictive text can influence the content of what people write.

Kenneth C. Arnold, Krysta Chauncey, and Krzysztof Z. Gajos. Predictive Text Encourages Predictable Writing. In Proceedings of the 25th International Conference on Intelligent User Interfaces. ACM, 2020. To appear.

Kenneth Arnold, Krysta Chauncey, and Krzysztof Gajos. Sentiment Bias in Predictive Text Recommendations Results in Biased Writing. In Proceedings of Graphics Interface 2018, GI 2018, pages 33–40. Canadian Human-Computer Communications Society / Société canadienne du dialogue humain-machine, 2018.

Kenneth C. Arnold, Krzysztof Z. Gajos, and Adam T. Kalai. On Suggesting Phrases vs. Predicting Words for Mobile Text Composition. In Proceedings of the 29th Annual Symposium on User Interface Software and Technology, UIST '16, pages 603–608, New York, NY, USA, 2016. ACM.

Exploring Individual Differences in How People Use Adaptive User Interfaces

Past work, including ours, has shown that well-designed adaptive user interfaces can substantially improve people's performance and that people prefer those interfaces to the standard one-size-fits-all designs. But do all people benefit from adaptive user interfaces equally, or do systematic differences cause some people to reap greater benefits than others? Our first study, which used the results from the Multitasking Test on LabintheWild, showed that people with a high need for cognition use the adaptive features of adaptive user interfaces much more than those with a low need for cognition. Also, introverts use the adaptive interface more than extroverts.

Krzysztof Z. Gajos and Krysta Chauncey. The Influence of Personality Traits and Cognitive Load on the Use of Adaptive User Interfaces. In Proceedings of the 22nd International Conference on Intelligent User Interfaces, IUI '17, pages 301–306, New York, NY, USA, 2017. ACM.

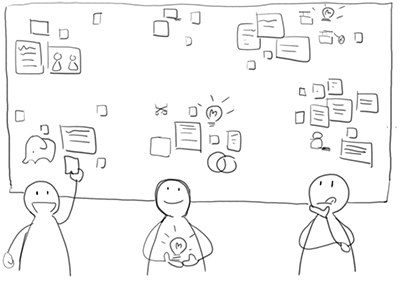

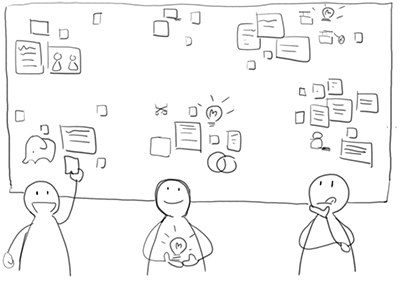

Supporting Effective Collective Ideation at Scale

Various online platforms in different domains, ranging from social development to product design, have emerged as spaces where people can share their ideas and get inspired by ideas from other people all over the world. The promise of these platforms is that the mix of perspectives and expertise among the participants should allow creative solutions to emerge in ways unimaginable in lone-innovator or small-group settings. In practice, however, existing online innovation platforms accumulate large numbers of mundane and repetitive ideas that rarely lead to valuable breakthroughs.

We have developed IdeaHound, an online platform that helps large groups of people generate diverse ideas together. IdeaHound is enabled by a crowd- and machine learning-based technique that generates a computational representation of the solution space, called an idea map, which encodes semantic relationships between ideas. The results of our study show that by presenting an automatically sampled set of creative and diverse example ideas from the idea map, IdeaHound can improve the diversity and creativity of ideas generated by participants compared to presenting a set of randomly selected examples. A subsequent study shed light on the best timing for delivering inspirational examples.
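To make the idea of sampling diverse examples from an idea map more concrete, here is a minimal sketch. It assumes the idea map is available as a dictionary from idea text to 2D coordinates in which nearby points are semantically similar, and it uses greedy farthest-point sampling as a stand-in selection rule. The function name, the data layout, and the sampling strategy are assumptions for illustration and are not taken from IdeaHound itself; the sketch also addresses only diversity, not creativity.

```python
import math


def pick_diverse_examples(idea_map, k):
    """Greedily pick up to k ideas that are spread out across the map.

    idea_map: dict mapping idea text -> (x, y) coordinates (assumed layout).
    Starts from the first idea, then repeatedly adds the idea whose nearest
    already-chosen neighbor is farthest away (farthest-point sampling).
    """
    ideas = list(idea_map)
    if k <= 0 or not ideas:
        return []
    chosen = [ideas[0]]
    while len(chosen) < min(k, len(ideas)):
        best = max(
            (idea for idea in ideas if idea not in chosen),
            key=lambda idea: min(
                math.dist(idea_map[idea], idea_map[c]) for c in chosen
            ),
        )
        chosen.append(best)
    return chosen


# Made-up idea map: two near-duplicate ideas and two distant ones.
example_map = {
    "solar backpack": (0.0, 0.0),
    "solar bag": (0.1, 0.0),
    "bike-powered charger": (5.0, 0.0),
    "shared tool library": (0.0, 5.0),
}
print(pick_diverse_examples(example_map, 3))
# ['solar backpack', 'bike-powered charger', 'shared tool library']
```

In this toy example the near-duplicate "solar bag" is skipped in favor of ideas from other regions of the map, which is the behavior a diversity-oriented sampler is meant to have.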

Joel Chan, Pao Siangliulue, Denisa Qori McDonald, Ruixue Liu, Reza Moradinezhad, Safa Aman, Erin T. Solovey, Krzysztof Z. Gajos, and Steven P. Dow. Semantically Far Inspirations Considered Harmful?: Accounting for Cognitive States in Collaborative Ideation. In Proceedings of the 2017 ACM SIGCHI Conference on Creativity and Cognition, C&C '17, pages 93–105, New York, NY, USA, 2017. ACM.

Pao Siangliulue, Joel Chan, Steven P. Dow, and Krzysztof Z. Gajos. IdeaHound: Improving Large-scale Collaborative Ideation with Crowd-Powered Real-time Semantic Modeling. In Proceedings of the 29th Annual Symposium on User Interface Software and Technology, UIST '16, pages 609-624, New York, NY, USA, 2016. ACM.

Pao Siangliulue, Joel Chan, Krzysztof Z. Gajos, and Steven P. Dow. Providing Timely Examples Improves the Quantity and Quality of Generated Ideas. In Proceedings of the 2015 ACM SIGCHI Conference on Creativity and Cognition, C&C '15, pages 83-92, New York, NY, USA, 2015. ACM.

Pao Siangliulue, Kenneth C. Arnold, Krzysztof Z. Gajos, and Steven P. Dow. Toward Collaborative Ideation at Scale: Leveraging Ideas from Others to Generate More Creative and Diverse Ideas. In Proceedings of the 18th ACM Conference on Computer Supported Cooperative Work & Social Computing, CSCW '15, pages 937-945, New York, NY, USA, 2015. ACM.

TELLab: Experimentation @ Scale to Support Experiential Learning in Social Sciences and Design

Well-conducted lecture demonstrations of natural phenomena improve students' engagement, learning, and retention of knowledge. Similarly, laboratory modules that allow for genuine exploration and discovery of relevant concepts can improve learning outcomes. These pedagogical techniques are used frequently in natural sciences and engineering to teach students about phenomena in the physical world. But how might we conduct a lecture demonstration that shows the impact of extraneous cognitive load on performance? How might we design a lab in which students explore how adding decorations to visualizations affects their comprehension and memorability?

We are developing tools, content and procedures to bring experiential learning techniques to social science and design-related courses that teach concepts related to human perception, cognition and behavior. Specifically, we are working to develop software technologies to enable rapid, large-scale and ethical online human-subjects experimentation in undergraduate design-related courses.

See the project web site for more.

Na Li, Krzysztof Z. Gajos, Ken Nakayama, and Ryan Enos. TELLab: An Experiential Learning Tool for Psychology. In Proceedings of the Second (2015) ACM Conference on Learning @ Scale, L@S '15, pages 293–297, New York, NY, USA, 2015. ACM.

Model of Technology Acceptance by Older Adults

Mobile technologies have the potential to improve the quality of life of older adults by supporting self-management of chronic care, providing ways to maintain social bonds with family and community, facilitating access to community resources, and in a myriad of other ways. Older adults, however, are less likely to adopt mobile technologies than younger adults. Why? So far, we have found one key factor not accounted for by other technology acceptance models. See the paper for more:

Sunyoung Kim, Krzysztof Z. Gajos, Michael Muller, and Barbara J. Grosz. Acceptance of Mobile Technology by Older Adults: A Preliminary Study. In Proceedings of the 18th International Conference on Human-Computer Interaction with Mobile Devices and Services, MobileHCI '16, pages 147-157, New York, NY, USA, 2016. ACM.

Understanding Crowdsourcing as a Tool for Research

Many excellent crowdsourcing and citizen science tools exist to support research, but only a tiny fraction of researchers make use of them. Why? Our interviews with 18 researchers across disciplines revealed a number of differences between the assumptions underlying existing crowd- and citizen-powered platforms and the prevalent research practices and norms.

Edith Law, Krzysztof Z. Gajos, Andrea Wiggins, Mary L. Gray, and Alex Williams. Crowdsourcing As a Tool for Research: Implications of Uncertainty. In Proceedings of the 2017 ACM Conference on Computer Supported Cooperative Work and Social Computing, CSCW '17, pages 1544–1561, New York, NY, USA, 2017. ACM.

Curiosity

We built on the information gap theory of curiosity to develop several interventions to motivate crowdworkers to persist longer on a task. Our experimental results show that curiosity interventions improve worker retention without degrading performance, and that the magnitude of the effects is influenced by both the personal characteristics of the worker and the nature of the task.

Edith Law, Ming Yin, Joslin Goh, Kevin Chen, Michael A. Terry, and Krzysztof Z. Gajos. Curiosity Killed the Cat, but Makes Crowdwork Better. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, CHI '16, pages 4098-4110, New York, NY, USA, 2016. ACM.

Honorable Mention

Learnersourcing: Leveraging Crowds of Learners to Improve the Experience of Learning from Videos

Rich knowledge about the content of educational videos can be used to enable more effective and more enjoyable learning experiences. We are developing tools that leverage crowds of learners to collect rich metadata about educational videos as a byproduct of the learners' natural interactions with the videos. We are also developing tools and techniques that use these metadata to improve the learning experience for others.

Sarah Weir, Juho Kim, Krzysztof Z. Gajos, and Robert C. Miller. Learnersourcing Subgoal Labels for How-to Videos. In Proceedings of CSCW'15, 2015.

Juho Kim, Philip J. Guo, Carrie J. Cai, Shang-Wen (Daniel) Li, Krzysztof Z. Gajos, and Robert C. Miller. Data-Driven Interaction Techniques for Improving Navigation of Educational Videos. In Proceedings of UIST'14, 2014. To appear.

Juho Kim, Phu Nguyen, Sarah Weir, Philip J Guo, Robert C Miller, and Krzysztof Z. Gajos. Crowdsourcing Step-by-Step Information Extraction to Enhance Existing How-to Videos. In Proceedings of CHI 2014, 2014. To appear.

Honorable Mention

Juho Kim, Shang-Wen (Daniel) Li, Carrie J. Cai, Krzysztof Z. Gajos, and Robert C. Miller. Leveraging Video Interaction Data and Content Analysis to Improve Video Learning. In Proceedings of the CHI 2014 Learning Innovation at Scale workshop, 2014.

Juho Kim, Philip J. Guo, Daniel T. Seaton, Piotr Mitros, Krzysztof Z. Gajos, and Robert C. Miller. Understanding In-Video Dropouts and Interaction Peaks in Online Lecture Videos. In Proceedings of Learning at Scale 2014, 2014. To appear.

Juho Kim, Robert C. Miller, and Krzysztof Z. Gajos. Learnersourcing subgoal labeling to support learning from how-to videos. In CHI '13 Extended Abstracts on Human Factors in Computing Systems, CHI EA '13, pages 685-690, New York, NY, USA, 2013. ACM.

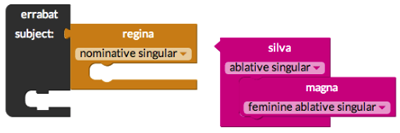

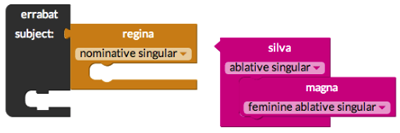

Ingenium: Improving Engagement and Accuracy with the Visualization of Latin for Language Learning

Learners commonly make errors in reading Latin because they do not fully understand the impact of Latin's grammatical structure (its morphology and syntax) on a sentence's meaning. Synthesizing instructional methods used for Latin and for artificial programming languages, Ingenium visualizes the logical structure of grammar by making each word into a puzzle block whose shape and color reflect the word's morphological forms and roles. Watch the video to see how it works.

Sharon Zhou, Ivy J. Livingston, Mark Schiefsky, Stuart M. Shieber, and Krzysztof Z. Gajos. Ingenium: Engaging Novice Students with Latin Grammar. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, CHI '16, pages 944-956, New York, NY, USA, 2016. ACM.

[Abstract, BibTeX, Video, etc.]

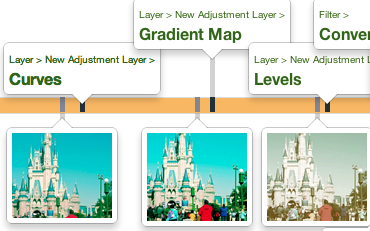

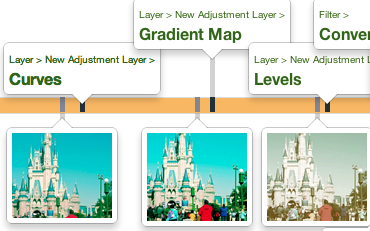

Predicting Users' First Impressions of Website Aesthetics

Users make lasting judgments about a website's appeal within a split second of seeing it for the first time. This first impression is influential enough to later affect their opinion of a site's usability and trustworthiness. In this project, we aim to automatically adapt website aesthetics to users' various preferences in order to improve this first impression. As a first step, we are working on predicting what people find appealing, and how this is influenced by their demographic backgrounds.

Katharina Reinecke and Krzysztof Z. Gajos. Quantifying Visual Preferences Around the World. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '14, pages 11-20, New York, NY, USA, 2014. ACM.

[Abstract, BibTeX, etc.]

Katharina Reinecke, Tom Yeh, Luke Miratrix, Rahmatri Mardiko, Yuechen Zhao, Jenny Liu, and Krzysztof Z. Gajos. Predicting users' first impressions of website aesthetics with a quantification of perceived visual complexity and colorfulness. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '13, pages 2049-2058, New York, NY, USA, 2013. ACM.

Honorable Mention

[Abstract, BibTeX, Data, etc.]

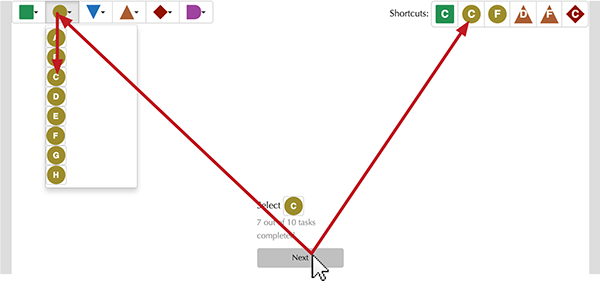

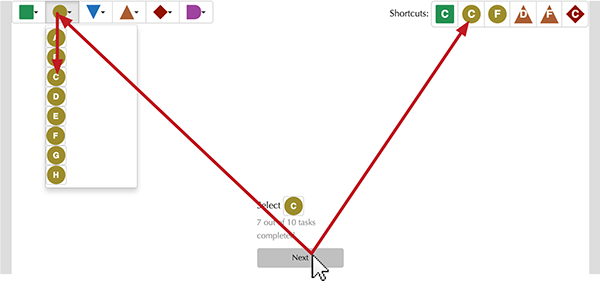

Adaptive Click and Cross: Adapting to Both Abilities and Task to Improve Performance of Users With Impaired Dexterity

Adaptive Click-and-Cross is an interaction technique for computer users with impaired dexterity. This technique combines three "adaptive" approaches that have appeared separately in previous literature: adapting the user's abilities to the interface (i.e., by modifying the way that the cursor works), adapting the user interface to the user's abilities (i.e., by modifying the user interface through enlarging items), and adapting the user interface to the user's task (i.e., by moving frequently or recently used items to a convenient location). Adaptive Click-and-Cross combines these three adaptations to minimize each approach's shortcomings, selectively enlarging items predicted to be useful to the user while employing a modified cursor to enable access to smaller items.

Louis Li and Krzysztof Z. Gajos. Adaptive Click-and-cross: Adapting to Both Abilities and Task Improves Performance of Users with Impaired Dexterity. In Proceedings of the 19th International Conference on Intelligent User Interfaces, IUI '14, pages 299–304, New York, NY, USA, 2014. ACM.

[Abstract, BibTeX, etc.]

Louis Li. Adaptive Click-and-cross: An Interaction Technique for Users with Impaired Dexterity. In Proceedings of the 15th International ACM SIGACCESS Conference on Computers and Accessibility, ASSETS '13, pages 79:1-79:2, New York, NY, USA, 2013. ACM.

[Abstract, BibTeX, etc.]

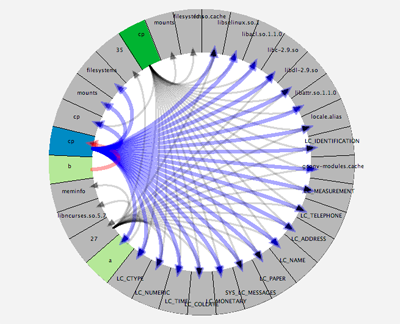

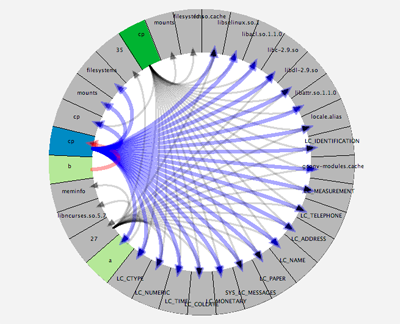

InProv: a Filesystem Provenance Visualization Tool

InProv is a filesystem provenance visualization tool, which displays provenance data with an interactive radial-based tree layout. The tool also utilizes a new time-based hierarchical node grouping method for filesystem provenance data we developed to match the user's mental model and make data exploration more intuitive. In an experiment comparing InProv to a visualization based on the node-link representation, participants using InProv made more accurate assessments of provenance and found InProv to require less mental effort, less physical activity, less work, and to be less stressful to use.

Michelle A. Borkin, Chelsea S. Yeh, Madelaine Boyd, Peter Macko, Krzysztof Z. Gajos, Margo Seltzer, and Hanspeter Pfister. Evaluation of Filesystem Provenance Visualization Tools. IEEE Transactions on Visualization and Computer Graphics, 19(12):2476-2485, 2013.

[Abstract, BibTeX, etc.]

Organic Peer Assessment

We are developing tools and techniques for organic peer assessment, an approach where assessment occurs as a side effect of students performing activities that they find intrinsically motivating. Our preliminary results, obtained in the context of a flipped classroom, show that the quality of the summative assessment produced by the peers matched that of experts, and we encountered strong evidence that our peer assessment implementation had positive effects on achievement.

Curio: a platform for crowdsourcing research tasks in sciences and humanities

Curio is intended to be a platform for crowdsourcing research tasks in sciences and humanities. The platform is designed to allow researchers to create and launch a new crowdsourcing project within minutes, and to monitor and control aspects of the crowdsourcing process with minimal effort. With Curio, we are exploring a new model of citizen science that significantly lowers the barrier of entry for scientists, developing new interfaces and algorithms for supporting mixed-expertise crowdsourcing, and investigating a variety of human computation questions related to task decomposition, incentive design, and quality control.

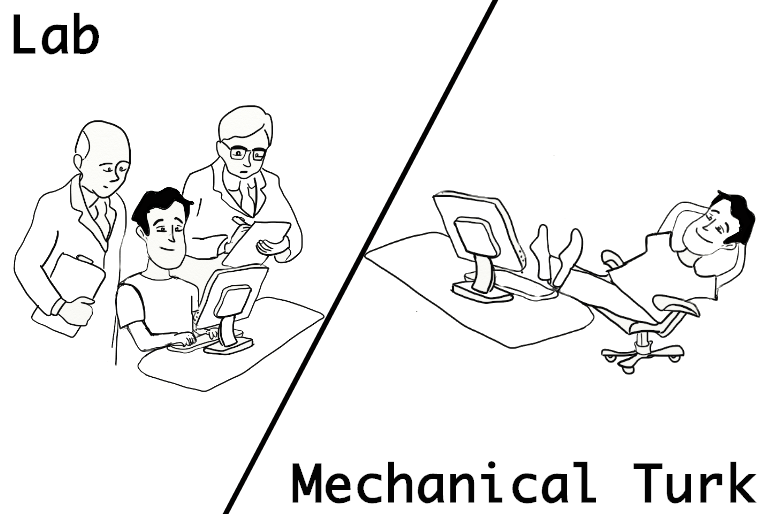

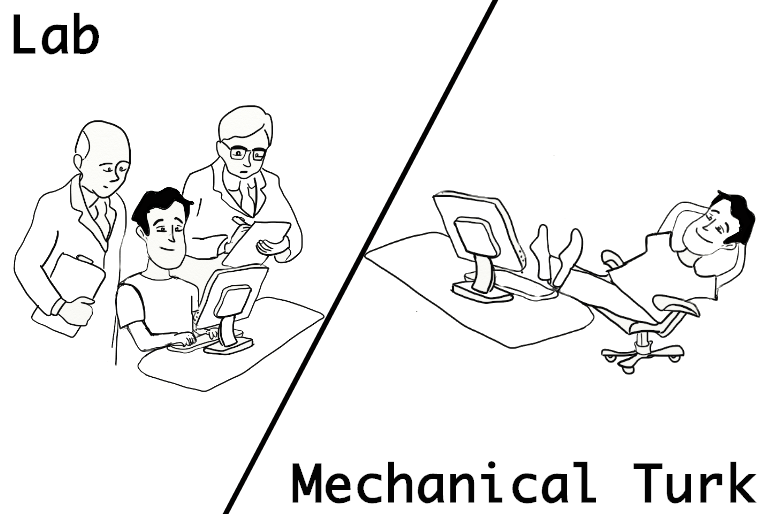

Crowdsourcing Performance Evaluations of User Interfaces

Can computer users be trusted to participate in user interface studies from the comfort of their home? Can user interface researchers give up control over their subjects' environment? In this project we study whether we can use Amazon Mechanical Turk to conduct user interface studies reliably. To do so, we replicated three previously known performance experiments, the "Bubble Cursor," the "Split Menus," and the "Split Interface," both in our lab and on Mechanical Turk. We compared the lab with the online population in terms of performance metrics such as speed, accuracy, and consistency. The results show that the Mechanical Turk participants perform just as well as the lab participants.

Steven Komarov, Katharina Reinecke, and Krzysztof Z. Gajos. Crowdsourcing performance evaluations of user interfaces. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '13, pages 207-216, New York, NY, USA, 2013. ACM.

[Abstract, BibTeX, Data, etc.]

SPRWeb: Preserving Subjective Responses to Website Colour Schemes through Automatic Recolouring

Colors are an important part of user experiences on the Web. Color schemes influence the aesthetics, first impressions, and long-term engagement with websites. However, five percent of people perceive only a subset of all colors because they have color vision deficiency (CVD), resulting in an unequal and less-rich user experience on the Web. Traditionally, people with CVD have been supported by recoloring tools that improve color differentiability but do not consider the subjective properties of color schemes while recoloring. To address this, we developed SPRWeb, a tool that recolors websites to preserve subjective responses and improve color differentiability, thus enabling users with CVD to have similar online experiences. SPRWeb is the first tool to automatically preserve the subjective and perceptual properties of website color schemes, thereby equalizing the color-based web experience for people with CVD.

David R. Flatla, Katharina Reinecke, Carl Gutwin, and Krzysztof Z. Gajos. SPRWeb: preserving subjective responses to website colour schemes through automatic recolouring. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '13, pages 2069-2078, New York, NY, USA, 2013. ACM.

Best Paper Award

[Abstract, BibTeX, etc.]

Cultural Differences in Time Perception and Group Decision-Making

When discussing the effect of technology on culture, people often assume that the world is slowly homogenizing into a culture of Internet users who share similar values and behavioral norms. Our analysis of online scheduling behavior on Doodle argues against this hypothesis. In fact, event scheduling is not simply a matter of finding a mutually agreeable time, but a process shaped by social norms and values, and this process can vary widely between countries. To investigate the influence of national culture on people's scheduling behavior, we analyzed more than 1.5 million Doodle date/time polls from 211 countries. Our findings include that people around the world steer their availabilities towards those options that have good chances of reaching consensus. But people from more group-oriented collectivist countries (e.g., India, China) seem to make a larger effort to reach mutual agreement than people from individualist countries (e.g., the US). We believe that increasing the awareness of such differences can help improve intercultural scheduling and support the acceptance of cultural differences as an interesting contribution to our lives.

Katharina Reinecke, Minh Khoa Nguyen, Abraham Bernstein, Michael Näf, and Krzysztof Z. Gajos. Doodle around the world: online scheduling behavior reflects cultural differences in time perception and group decision-making. In Proceedings of the 2013 conference on Computer supported cooperative work, CSCW '13, pages 45-54, New York, NY, USA, 2013. ACM.

[Abstract, BibTeX, Data, etc.]

Exploring The Design Space Of Adaptive User Interfaces

For decades, researchers have presented different adaptive user interfaces and discussed the pros and cons of adaptation on task performance and satisfaction. Little research, however, has been directed at isolating and understanding those aspects of adaptive interfaces which make some of them successful and others not. We have conducted several laboratory studies to systematically isolate some of the design and contextual factors that affect the impact of adaptation on users' performance and satisfaction. The results of these studies, combined with the recent work of others, provide an initial characterization of the design space of adaptive graphical user interfaces.

Accurate Measurements of Pointing Performance from In Situ Observations

We present a method for obtaining lab-quality measurements of pointing performance from unobtrusive observations of natural in situ interactions. Specifically, we have developed a set of user-independent classifiers for discriminating between deliberate, targeted mouse pointer movements and those movements that were affected by any extraneous factors. Our results show that, on four distinct metrics, the data collected in situ and filtered with our classifiers closely match the results obtained from the formal experiment.

Krzysztof Gajos, Katharina Reinecke, and Charles Herrmann. Accurate measurements of pointing performance from in situ observations. In Proceedings of the 2012 ACM annual conference on Human Factors in Computing Systems, CHI '12, pages 3157-3166, New York, NY, USA, 2012. ACM.

[Abstract, BibTeX, Authorizer, Data and Source Code, etc.]

Mobi: Human Computation Tasks with Global Constraints

An important class of tasks that are underexplored in current human computation systems are complex tasks with global constraints. One example of such a task is itinerary planning, where solutions consist of a sequence of activities that meet requirements specified by the requester. In this paper, we focus on the crowdsourcing of such plans as a case study of constraint-based human computation tasks and introduce a collaborative planning system called Mobi that illustrates a novel crowdware paradigm. Mobi presents a single interface that enables crowd participants to view the current solution context and make appropriate contributions based on current needs. We conduct experiments that explain how Mobi enables a crowd to effectively and collaboratively resolve global constraints, and discuss how the design principles behind Mobi can more generally facilitate a crowd to tackle problems involving global constraints.

Haoqi Zhang, Edith Law, Rob Miller, Krzysztof Gajos, David Parkes, and Eric Horvitz. Human computation tasks with global constraints. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '12, pages 217-226, New York, NY, USA, 2012. ACM.

Honorable Mention

[Abstract, BibTeX, etc.]

PlateMate: Crowdsourcing Nutrition Analysis from Food Photographs

PlateMate allows users to take photos of their meals and receive estimates of food intake and composition. Accurate awareness of this information is considered a prerequisite to successful change of eating habits, but current methods for food logging via self-reporting, expert observation, or algorithmic analysis are time-consuming, expensive, or inaccurate. PlateMate crowdsources nutritional analysis from photographs using Amazon Mechanical Turk, automatically coordinating untrained workers to estimate a meal's calories, fat, carbohydrates, and protein. To make PlateMate possible, we developed the Management framework for crowdsourcing complex tasks, which supports PlateMate's decomposition of the nutrition analysis workflow. Two evaluations show that the PlateMate system is nearly as accurate as a trained dietitian and easier to use for most users than traditional self-reporting, while remaining robust for general use across a wide variety of meal types.

Jon Noronha, Eric Hysen, Haoqi Zhang, and Krzysztof Z. Gajos. PlateMate: Crowdsourcing Nutrition Analysis from Food Photographs. In Proceedings of the 24th annual ACM symposium on User interface software and technology, UIST '11, pages 1-12, New York, NY, USA, 2011. ACM.

[Abstract, BibTeX, Authorizer, Data, etc.]

PETALS Project -- A Visual Decision Support Tool For Landmine Detection

Landmines remain in conflict areas for decades after the end of hostilities. Their suspected presence renders vast tracts of land unusable for development and agriculture, causing significant psychological and economic damage. Landmine removal is a slow and dangerous process. Compounding the difficulty, modern landmines contain minimal amounts of metal, making them very hard to detect and to distinguish from other metallic debris (such as bullet shells, wires, etc.) frequently present in post-combat areas.

Recent research has demonstrated that the accuracy of landmine detection can be improved if deminers try to mentally represent the shape of the area where the metal detector's response gets triggered. Despite similar amounts of metallic content, mines and clutter result in areas of different shapes. Building on these findings, we have created a visual decision support tool that presents the deminer with an explicit visualization of the shapes of these response areas. The results of our study demonstrate that this tool significantly improves novice deminers' detection rates and improves their localization accuracy.

Lahiru Jayatilaka, David M. Sengeh, Charles Herrmann, Luca Bertuccelli, Dimitrios Antos, Barbara J. Grosz, and Krzysztof Z. Gajos. PETALS: Improving Learning of Expert Skill in Humanitarian Demining. In Proceedings of the 1st ACM SIGCAS Conference on Computing and Sustainable Societies, COMPASS '18, pages 33:1–33:11, New York, NY, USA, 2018. ACM.

Best Paper Award

[Abstract, BibTeX, Slides, etc.]

Lahiru G. Jayatilaka, Luca F. Bertuccelli, James Staszewski, and Krzysztof Z. Gajos. Evaluating a Pattern-Based Visual Support Approach for Humanitarian Landmine Clearance. In CHI '11: Proceedings of the annual SIGCHI conference on Human factors in computing systems, New York, NY, USA, 2011. ACM.

[Abstract, BibTeX, Authorizer, etc.]

Lahiru G. Jayatilaka, Luca F. Bertuccelli, James Staszewski, and Krzysztof Z. Gajos. PETALS: a visual interface for landmine detection. In Adjunct proceedings of the 23rd annual ACM symposium on User interface software and technology, UIST '10, pages 427-428, New York, NY, USA, 2010. ACM.

[Abstract, BibTeX, Authorizer, etc.]

HemoVis: Artery Visualization for Heart Disease Diagnosis

Heart disease is the number one killer in the United States, and finding indicators of the disease at an early stage is critical for treatment and prevention. In this paper we evaluate visualization techniques that enable the diagnosis of coronary artery disease. A key physical quantity of medical interest is endothelial shear stress (ESS). Low ESS has been associated with sites of lesion formation and rapid progression of disease in the coronary arteries. Having effective visualizations of a patient's ESS data is vital for the quick and thorough non-invasive evaluation by a cardiologist. We present a task taxonomy for hemodynamics based on a formative user study with domain experts. Based on the results of this study we developed HemoVis, an interactive visualization application for heart disease diagnosis that uses a novel 2D tree diagram representation of coronary artery trees. We present the results of a formal quantitative user study with domain experts that evaluates the effect of 2D versus 3D artery representations and of color maps on identifying regions of low ESS. We show statistically significant results demonstrating that our 2D visualizations are more accurate and efficient than 3D representations, and that a perceptually appropriate color map leads to fewer diagnostic mistakes than a rainbow color map.

Michelle A. Borkin, Krzysztof Z. Gajos, Amanda Peters, Dimitrios Mitsouras, Simone Melchionna, Frank J. Rybicki, Charles L. Feldman, and Hanspeter Pfister. Evaluation of Artery Visualizations for Heart Disease Diagnosis. IEEE Transactions on Visualization and Computer Graphics (Proceedings of Information Visualization 2011), 17(12), December 2011.

[Abstract, BibTeX, etc.]

Automatic Task Design on Amazon Mechanical Turk

A central challenge in human computation is in understanding how to design task environments that effectively attract participants and coordinate the problem solving process. We consider a common problem that requesters face on Amazon Mechanical Turk: how should a task be designed so as to induce good output from workers? In posting a task, a requester decides how to break down the task into unit tasks, how much to pay for each unit task, and how many workers to assign to a unit task. These design decisions affect the rate at which workers complete unit tasks, as well as the quality of the work that results. Using image labeling as an example task, we consider the problem of designing the task to maximize the number of quality tags received within given time and budget constraints. We consider two different measures of work quality, and construct models for predicting the rate and quality of work based on observations of output to various designs. Preliminary results show that simple models can accurately predict the quality of output per unit task, but are less accurate in predicting the rate at which unit tasks complete. At a fixed rate of pay, our models generate different designs depending on the quality metric, and optimized designs obtain significantly more quality tags than baseline comparisons.

Eric Huang, Haoqi Zhang, David C. Parkes, Krzysztof Z. Gajos, and Yiling Chen. Toward automatic task design: a progress report. In Proceedings of the ACM SIGKDD Workshop on Human Computation, HCOMP '10, pages 77-85, New York, NY, USA, 2010. ACM.

[Abstract, BibTeX, Authorizer, etc.]

This page was last modified on April 26, 2022.

Healthcare, clinical trials, and research related to neurological disease all require tools for accurately and objectively measuring motor impairments.

Our first tool, called Hevelius, measures motor impairment in the dominant arm based on a person's performance on a simple computer mouse-based task. We are working on other tools as well as on ways to make accurate measurements possible at home without help from a clinician. Such at-home measurements can enable granular longitudinal measurements of disease progression as well as large-scale assessments. This project is done in collaboration with the Laboratory for Deep Neurophenotyping at Massachusetts General Hospital.

Children with complex health conditions require care from a large, diverse team of caregivers that includes multiple types of medical professionals, parents, and community support organizations. Coordination of their outpatient care, essential for good outcomes, presents major challenges. Our formative studies revealed that the nature of teamwork in complex care poses challenges to team coordination that extend beyond those identified in prior work and beyond those that can be handled by existing coordination systems. We are building on a computational theory of teamwork to create entirely new tools to support complex, loosely coupled teamwork.

LabintheWild is a platform for conducting large-scale behavioral experiments with unpaid online volunteers. LabintheWild helps make empirical research in Human-Computer Interaction more reliable (by making it possible to recruit many more participants than would be possible in conventional laboratory studies) and more generalizable (by enabling access to very diverse groups of participants).

Epidemiologic studies and public health biomonitoring rely on chemical exposure measurements in blood, urine, and other tissues, and in personal environments, such as homes. For many chemicals, the health implications of individual results are uncertain, and the sources and strategies to reduce exposure may not be known. Yet, a growing number of researchers consider it their ethical obligation to report the results back to their participants. In a project led by the

Parent-child interaction therapy (PCIT) helps parents improve the quality of interaction with children who have behavior problems. The therapy trains parents to use effective dialogue acts when interacting with their children. Besides weekly coaching by therapists, the therapy relies on deliberate practice of skills by parents in their homes. We developed SpecialTime, a system that provides parents engaged in PCIT with automatic, real-time feedback on their dialogue act use. The results of a one-month pilot study with parents enrolled in PCIT suggest that automatic feedback on spoken dialogue acts is possible in PCIT, and that parents find the automatic feedback useful. We are currently conducting a larger evaluation of SpecialTime's impact on the effectiveness of the therapy. This work is co-led by

Predictive text technology (e.g., the word suggestions displayed on most keyboards on mobile devices) was designed to improve the ease and efficiency of text entry. Our work demonstrates that predictive text can influence the content of what people write.

Past work, including ours, has shown that well-designed adaptive user interfaces can substantially improve people's performance and that people prefer those interfaces to the standard one-size-fits-all designs. But do all people benefit from adaptive user interfaces equally, or do systematic differences cause some people to reap greater benefit than others? Our first study, which utilized the results from the

Various online platforms for different domains--ranging from social development to product design--have emerged as a space where people can share their ideas and get inspired by ideas from other people all over the world. The promise of these platforms is that the mix of perspectives and expertise among the participants should allow creative solutions to emerge in ways unimaginable in the lone-innovator or small-group settings. In practice, however, existing online innovation platforms accumulate large numbers of mundane and repetitive ideas that rarely lead to valuable breakthroughs.

Well-conducted lecture demonstrations of natural phenomena improve students' engagement, learning, and retention of knowledge. Similarly, laboratory modules that allow for genuine exploration and discovery of relevant concepts can improve learning outcomes. These pedagogical techniques are used frequently in natural sciences and engineering to teach students about phenomena in the physical world. But how might we conduct a lecture demonstration to show the impact of extraneous cognitive load on performance? How might we design a lab in which students explore how adding decorations to visualizations affects their comprehension and memorability?

We are developing tools, content and procedures to bring experiential learning techniques to social science and design-related courses that teach concepts related to human perception, cognition and behavior. Specifically, we are working to develop software technologies to enable rapid, large-scale and ethical online human-subjects experimentation in undergraduate design-related courses.

See the

Many excellent crowdsourcing and citizen science tools exist to support research, but only a tiny fraction of researchers make use of them. Why? Our interviews with 18 researchers across disciplines revealed a number of differences between the assumptions underlying existing crowd- and citizen-powered platforms and the prevalent research practices and norms.

We built on the information gap theory of curiosity to develop several interventions to motivate crowdworkers to persist longer on a task. Our experimental results show that curiosity interventions improve worker retention without degrading performance, and that the magnitude of the effects is influenced by both the personal characteristics of the worker and the nature of the task.

Rich knowledge about the content of educational videos can be used to enable more effective and more enjoyable learning experiences. We are developing tools that leverage crowds of learners to collect rich metadata about educational videos as a byproduct of the learners' natural interactions with the videos. We are also developing tools and techniques that use this metadata to improve the learning experience for others.

Learners commonly make errors in reading Latin, because they do not fully understand the impact of Latin's grammatical structure--its morphology and syntax--on a sentence's meaning. Synthesizing instructional methods used for Latin and artificial programming languages, Ingenium visualizes the logical structure of grammar by making each word into a puzzle block, whose shape and color reflect the word's morphological forms and roles. See

Users make lasting judgments about a website's appeal within a split second of seeing it for the first time. This first impression is influential enough to later affect their opinion of a site's usability and trustworthiness. In this project, we aim to automatically adapt website aesthetics to users' various preferences in order to improve this first impression. As a first step, we are working on predicting what people find appealing, and how this is influenced by their demographic backgrounds.

Adaptive Click-and-Cross is an interaction technique for computer users with impaired dexterity. This technique combines three "adaptive" approaches that have appeared separately in previous literature: adapting the user's abilities to the interface (i.e., by modifying the way that the cursor works), adapting the user interface to the user's abilities (i.e., by modifying the user interface through enlarging items), and adapting the user interface to the user's task (i.e., by moving frequently or recently used items to a convenient location). Adaptive Click-and-Cross combines these three adaptations to minimize each approach's shortcomings, selectively enlarging items predicted to be useful to the user while employing a modified cursor to enable access to smaller items.
